Chenyang Si

Associate Professor

School of Intelligence Science and Technology

Nanjing University

Chenyang Si is a Tenure-Track Associate Professor with PRLab at the School of Intelligence Science and Technology, Nanjing University (Suzhou Campus). Before joining Nanjing University, he was a Research Fellow at Nanyang Technological University (NTU), Singapore, working with Prof. Ziwei Liu, and earlier a Research Scientist at the Sea AI Lab of Sea Group. He received his Ph.D. in 2021 from CASIA, supervised by Prof. Tieniu Tan and co-supervised by Prof. Liang Wang and Prof. Wei Wang.

His research interests span visual understanding and generation, including fundamental architectures for computer vision, video understanding, generative models, video and image generation, as well as acceleration and optimization of generative models.

Video Generation · Diffusion Models · Visual Understanding · World Models · Embodied AI · Agent · Efficient Generative Models · Evaluation Benchmarks

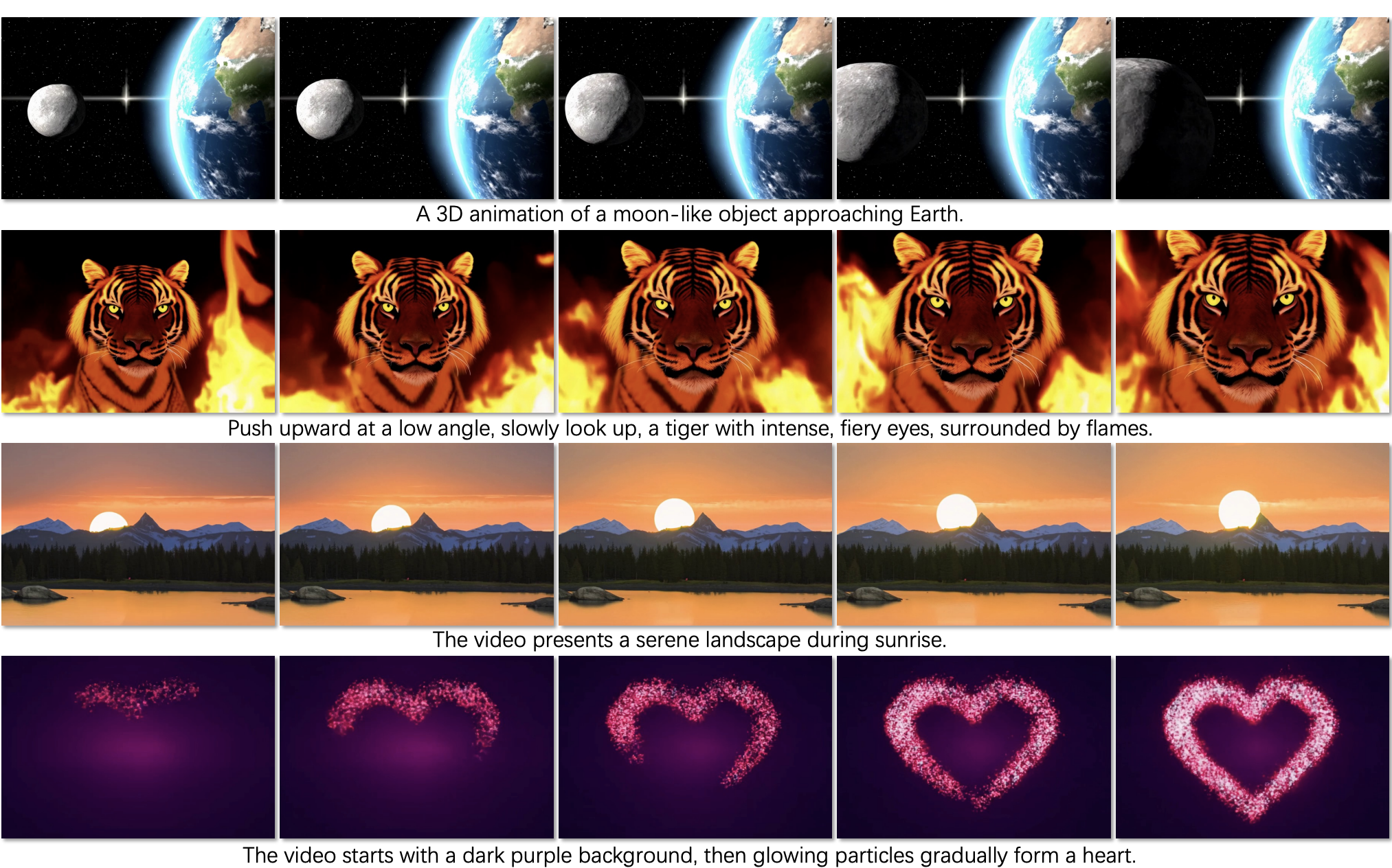

Video generation is our flagship research direction. We study generative models for high-quality and controllable video synthesis, including diffusion-based video models, consistency models, and efficient video generation architectures. Our work spans the full pipeline from foundational architecture design to practical deployment optimization.

We study the core mechanisms that enable autonomous agents to operate in open-ended environments. Our research focuses on agent memory architectures for long-horizon reasoning, agentic reinforcement learning that combines LLM-based planning with RL-driven optimization, agent sandboxes for safe and reproducible evaluation, and agent-driven social and world simulation to model complex multi-agent dynamics at scale.

We explore embodied intelligence paradigms where agents learn through physical interaction with dynamic environments. Our work focuses on integrating world knowledge from large-scale generative models into embodied systems for manipulation, navigation, and planning in real-world settings.

We build world models that capture the underlying physical dynamics and causal structure of real-world environments through video prediction and simulation. Beyond passive world simulation, we are actively exploring World Action Models (WAMs) that jointly model perception, dynamics, and action generation for zero-shot policy learning.

We pursue unified architectures that bridge the fundamental divide between visual generation and understanding within a single framework. Our research investigates how diffusion-based and autoregressive models can serve as a shared backbone for both discriminative and generative tasks, while also exploring diffusion language models to unify vision and language at a deeper representational level.

We investigate training-free and training-based acceleration methods for large generative models, reducing inference cost while maintaining generation quality.

We are actively seeking highly motivated students and researchers to join PRLab at Nanjing University. Our lab focuses on research in visual understanding and generation, with a particular emphasis on video generation and diffusion models. If you are interested in applying, please refer to this Zhihu post and fill out the Google Form.

Please send your CV and a brief research statement. We look forward to hearing from you.